As Elon University continues work to integrate artificial intelligence on campus, professors and students have adopted it to varying degrees. Elon junior Emmaline Cousins, a public health major, said she frequently uses AI in her personal life but avoids using it for the majority of her school work.

“I think using it in a way of researching health topics is not the best way to use AI, because it’s a chat box telling you information based on the algorithm,” Cousins said. “But outlining things or formatting emails is a good way to use it.”

Cousins is taking the class PSY 3704: Psychology of Adolescence and Emerging Adulthood taught by Sarah Bunnell, an associate professor of psychology. In Bunnell’s syllabus for PSY 3704 she outlines the accepted use of AI for each type of assignment, which is limited to brainstorming and image generation for case studies and a self-concept map.

Most assignments in Bunnell’s class do not allow AI usage, which Bunnell said is meant to make sure students are properly engaging with course content. Bunnell recognizes that AI can be a tool to facilitate learning, and said all AI use must be disclosed according to American Psychological Association guidelines.

“One of the key learning objectives is to learn ‘how do I apply really complicated frameworks and theories to myself and others,’” Bunnell said. “Doing the work of actual application and thinking about yourself when doing written reflections, that’s critical. That can’t be outsourced.”

According to Elon’s Generative AI Statement published by the Office of the Provost, Elon University sees generative AI as a tool for enhancing — but not replacing — teaching and learning.

“In line with our mission, we recognize the importance of equipping our students with the necessary skills to embrace technology for enhanced learning and engagement in their personal, professional, and civic lives,” stated the Office of the Provost.

The statement acknowledges that while AI is effective for some uses, it is not suited to others.

Elon University gives faculty the power to decide how AI will be used or discouraged in their classes, and states that these expectations should be clearly communicated to students.

Elon University has been working to integrate AI on campus since 2023, when it published its six AI principles. Since then, Elon has launched initiatives such as Elon Q&A to encourage students to use artificial intelligence. Another example is the Make Your Mark: AI Poster Competition, an event hosted by the Elon AI Hub, School of Communications and Love School of Business.

Shannon Duvall, a professor of computer science and interim associate dean of Elon College, is accepting of AI in her classes. Duvall began her PhD in artificial intelligence in 1997, before generative AI was a popular concept. Though the understanding of AI has changed, Duvall said she is still just as excited about its potential uses.

“Obviously, my students love it,” Duvall said. “It does make sense that our students are early adopters, that they’re excited about tech, that they’re fascinated by how it works, which is good because I’m fascinated by it as well.”

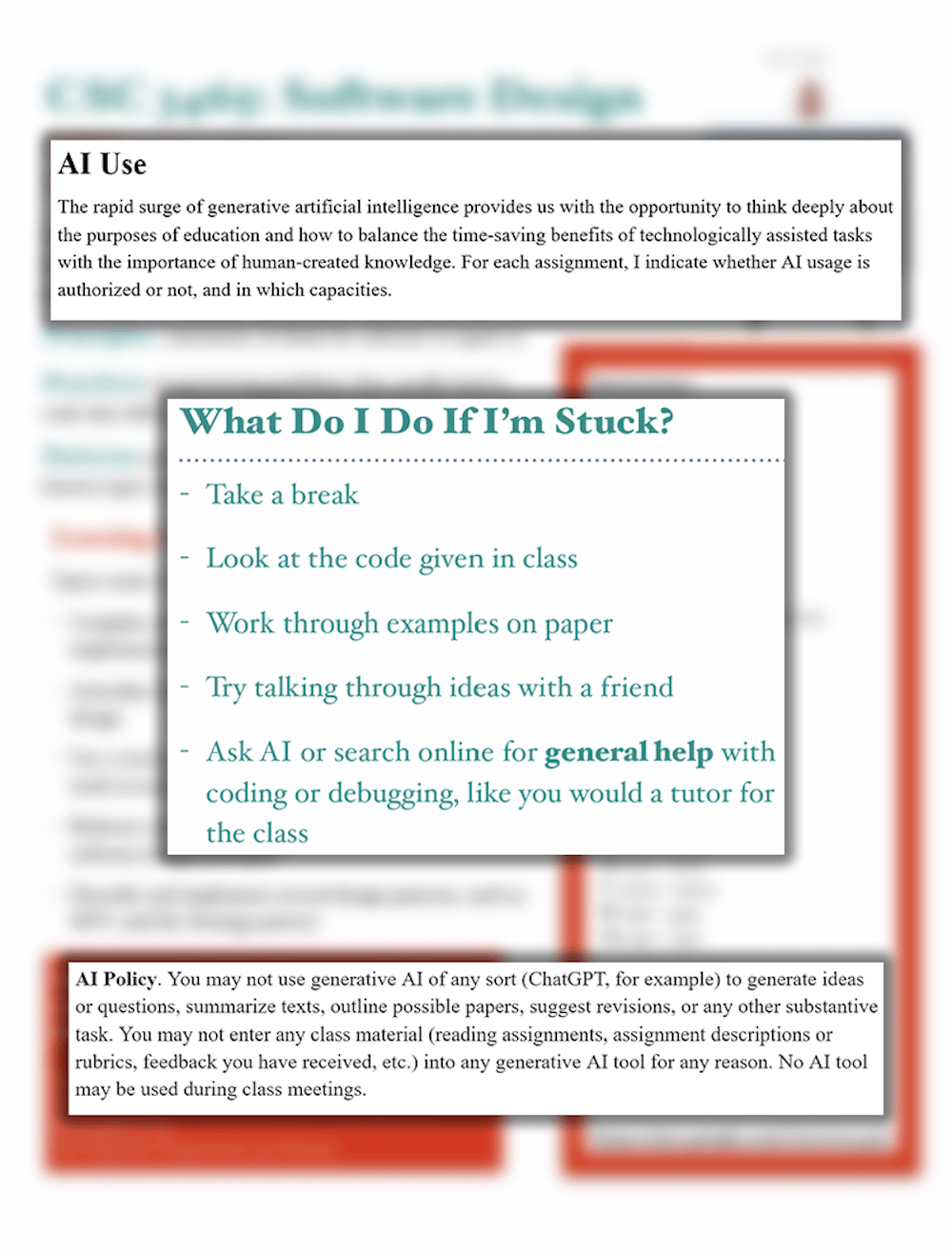

Duvall’s intro-level courses allow AI use, but she has increased in-class assessments and encourages students to use AI for learning rather than doing. Her upper-level courses discuss how AI can be used ethically in the computer science field, and how companies may use or discourage it. In her classes, having AI write a student’s code is considered cheating.

According to Duvall, much of the computer science field has been quick to adopt AI, with programs like Claude Code used to increase productivity.

Other professors are against AI use in their classes. Professor of philosophy Ann Cahill said AI has created a “hostile learning environment” for her students.

“My approach is that its presence has created pressure on my students to make decisions that they didn’t have to 3 years ago,” Cahill said. “They have to decide, ‘do I ask generative AI to summarize this terribly difficult philosophical article,’ rather than have struggled themselves through it.”

Though Cahill makes it clear in her syllabuses that she does not allow any AI usage in her classes, she has had to change or remove assignments on which these policies were difficult to enforce. Cahill said she has started making her tests timed and proctored in computer labs to make sure students are not using AI to cheat, a solution she said she resents.

According to Cahill, AI use in professional philosophy is regarded with suspicion and seen as unethical. Cahill said the philosophy department is drafting an official departmental policy against AI use.

Cahill is the co-coordinator for the AI Critical Working Group, a grassroots team of faculty and staff that formed out of concern that the desire to exclude AI from some learning spaces was going unaddressed. One of the group’s first additions to campus was a designation for AI-free classes on OnTrack.

“We have had meetings with the Elon Core Curriculum Committee, we’ve had discussions with the university curriculum committee, we’ve had multiple meetings with the director of AI integration,” Cahill said. “As we went about starting to advocate for these things, I have not felt a lack of institutional engagement with our work.”

Associate professor of law Caroleen Dineen said that the School of Law doesn’t have a uniform policy on AI, and that policies are set by individual professors. She said this is not only because law professors have differing opinions on AI, but also because each aspect of law has different possibilities for integrating AI.

“Students need to be prepared to use technology, or at least hire people who know how to use technology,” Dineen said. “It’s important for them to understand that, but they also have ethical obligations to make sure they are presenting accurate information on duty of candor of court.”

As interim associate dean of Elon College, Duvall has seen many different reactions to the integration of AI. She said many students in social studies and humanities are skeptical of AI, while many computer science students were quicker to adopt it. Even though she’s encouraged AI use, other computer science professors have chosen not to adopt AI into their classrooms.

Duvall said that it’s important not to generalize department feelings toward AI, because reactions to it can be based on personal biases for or against the technology.

“Some people are early adopters, some people are late adopters,” Duvall said. “I see more in terms of individual faculty and their individual feelings about it, more so than whole departmental feelings.”